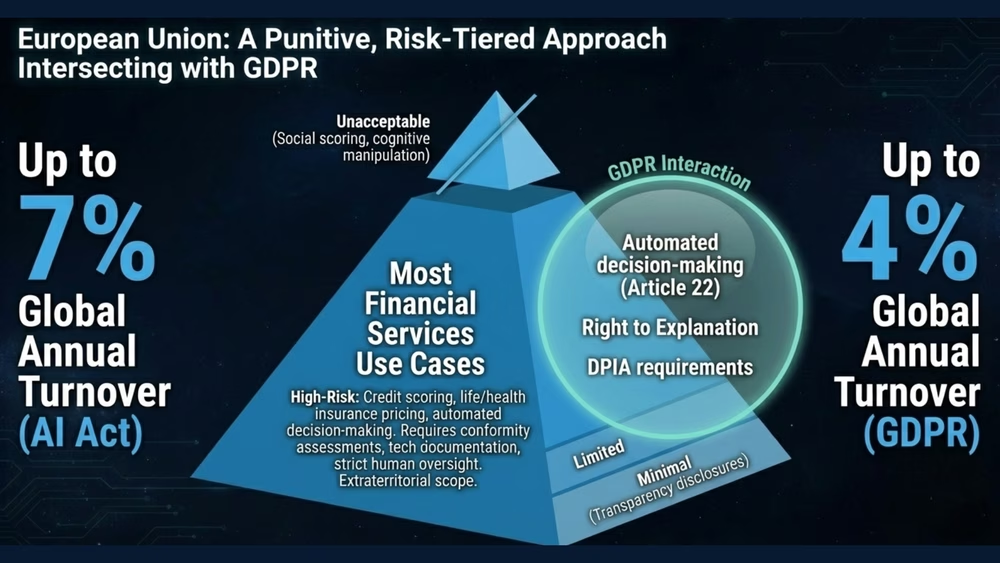

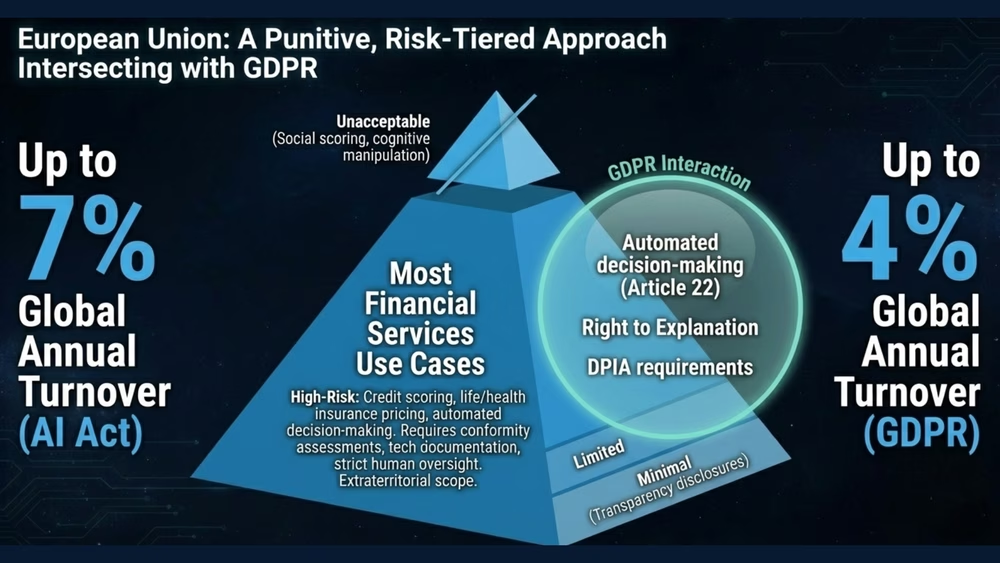

Four-tier risk classification with significant penalties. Most financial institution AI use cases fall into the high-risk category.

The EU AI Act is the world's first comprehensive AI legislation. It entered into force on August 1, 2024, and establishes a risk-based regulatory framework with phased implementation running through August 2027. For financial institutions, the most consequential provisions are the high-risk requirements, which take full effect in August 2026.

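The AI Act establishes four tiers of risk, each with different regulatory obligations: unacceptable risk (prohibited practices), high risk (strict requirements before and after a system is placed on the market), limited risk (transparency obligations), and minimal risk (no new mandatory obligations).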

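The AI Act explicitly classifies the following financial services applications as high-risk: AI systems used to evaluate the creditworthiness of natural persons or establish their credit score (with a carve-out for systems used to detect financial fraud), and AI systems used for risk assessment and pricing of life and health insurance for natural persons.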

In practice, the majority of AI systems deployed by financial institutions — from customer-facing chatbots making product recommendations to internal risk models — will require careful assessment against the high-risk classification criteria.

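The enforcement regime is substantial and designed to ensure compliance: fines of up to €35 million or 7% of total worldwide annual turnover for prohibited practices, up to €15 million or 3% for breaches of most other obligations (including the high-risk requirements), and up to €7.5 million or 1% for supplying incorrect or misleading information to authorities. In each case the higher of the two amounts applies, except for SMEs, for which the lower applies.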
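The fine ceilings in the AI Act combine a fixed amount with a percentage of worldwide annual turnover, which makes exposure easy to estimate with simple arithmetic. The sketch below is illustrative only: the tier names, `PenaltyTier`, and `max_fine_eur` are hypothetical, and the figures reflect Article 99 as commonly summarized (€35 million or 7%, €15 million or 3%, €7.5 million or 1%), so they should be checked against the official text before being relied on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    """One fine ceiling: a fixed amount and a share of turnover (hypothetical type)."""
    description: str
    fixed_cap_eur: int
    turnover_bps: int  # share of turnover in basis points (700 = 7%)


# Assumed figures, based on Article 99 of the AI Act; verify before use.
TIERS = {
    "prohibited_practice": PenaltyTier(
        "Prohibited AI practices", 35_000_000, 700),
    "other_obligation": PenaltyTier(
        "Other obligations, including high-risk requirements", 15_000_000, 300),
    "incorrect_information": PenaltyTier(
        "Incorrect or misleading information to authorities", 7_500_000, 100),
}


def max_fine_eur(tier_key: str, worldwide_turnover_eur: int,
                 is_sme: bool = False) -> int:
    """Return the fine ceiling in euros for a tier and annual turnover.

    The higher of the fixed amount and the turnover share applies,
    except for SMEs, where the lower of the two applies.
    """
    tier = TIERS[tier_key]
    turnover_share = worldwide_turnover_eur * tier.turnover_bps // 10_000
    pick = min if is_sme else max
    return pick(tier.fixed_cap_eur, turnover_share)


if __name__ == "__main__":
    # A bank with EUR 2 billion in turnover breaching a high-risk obligation.
    print(max_fine_eur("other_obligation", 2_000_000_000))
```

For a large institution the turnover share usually dominates the fixed amount, which is why exposure scales with group revenue rather than with the size of the individual AI deployment.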

The GDPR intersects with AI governance at every point where AI processes personal data — which in financial services means virtually every AI use case. The two frameworks create overlapping but distinct obligations that must be addressed holistically.

GDPR Article 22 gives individuals the right not to be subject to decisions based solely on automated processing that produce legal effects or similarly significant effects. For financial institutions, this creates specific obligations around credit decisions, insurance underwriting, and fraud detection that must be reconciled with AI Act requirements.

Data Protection Impact Assessments (DPIAs) are required under GDPR for high-risk processing activities. AI-driven financial services applications almost universally trigger DPIA requirements, and these assessments must now be coordinated with the AI Act's conformity assessment requirements to avoid duplication and ensure consistency.

GDPR's requirements for meaningful information about the logic involved in automated decisions dovetail with the AI Act's transparency and explainability requirements. Financial institutions must develop explanation capabilities that satisfy both frameworks simultaneously.

Corvair's composable lens architecture and SCAR scoring methodology map directly to the EU AI Act's fairness and explainability requirements. Each governance lens generates auditable, structured evidence that can be presented to EU regulators in the format they expect, while simultaneously satisfying MAS AIRG and other jurisdictional requirements.

The result is a single governance architecture that produces EU-compliant documentation as a natural output of ongoing AI operations, rather than a separate compliance exercise conducted after the fact.

