The most comprehensive AI governance framework in Asia-Pacific — and the most urgent compliance deadline.


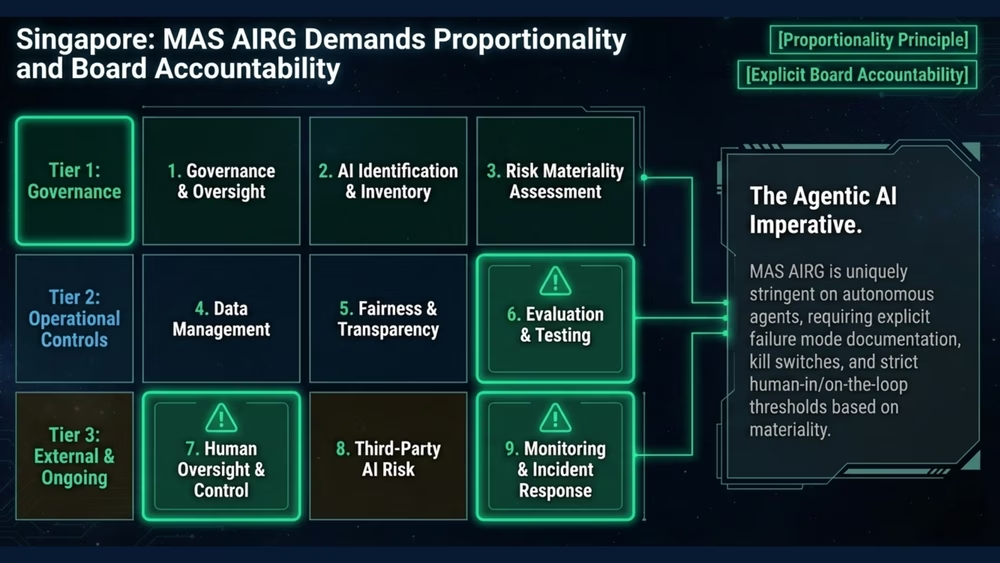

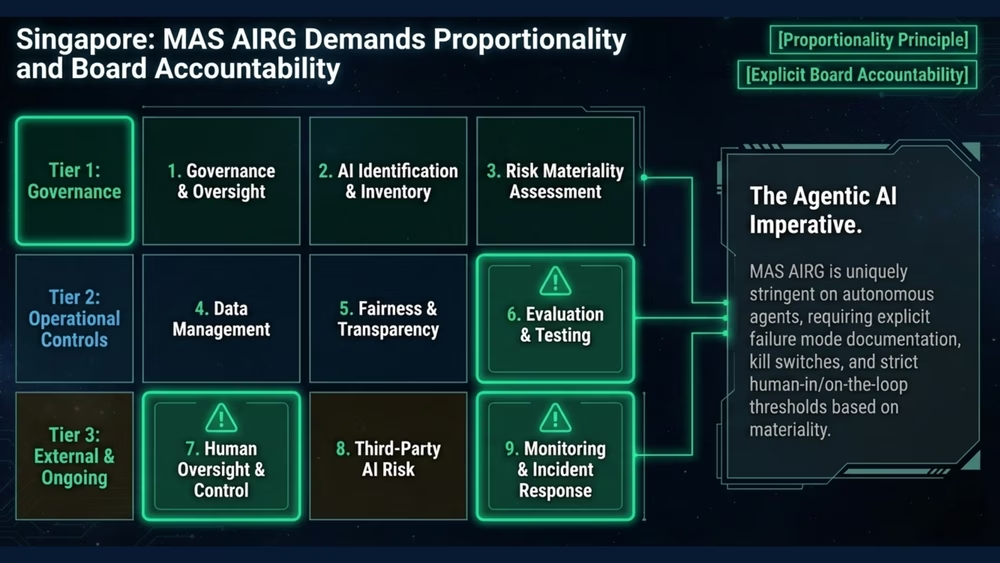

The Monetary Authority of Singapore's Artificial Intelligence Risk Governance (AIRG) guidelines are among the most comprehensive supervisory frameworks for AI in financial services. They set expectations across nine governance domains and explicitly address the risks posed by agentic AI systems.


Status: Consultation closed January 2026. Final guidelines expected mid-2026.

Compliance deadline: 12 months from issuance (estimated mid-2027).

Applies to: All MAS-regulated financial institutions.

Enforcement: MAS supervisory expectations; non-compliance can trigger thematic reviews, enhanced reporting, and deployment restrictions.

Each domain establishes specific supervisory expectations that financial institutions must address in their AI governance frameworks.

| # | Domain | What Financial Institutions Must Do |
|---|---|---|
| 1 | Governance & Oversight | Establish board-level accountability for AI risk, define roles and responsibilities, and implement governance structures proportionate to AI materiality. |
| 2 | AI Identification & Inventory | Maintain a comprehensive inventory of all AI systems, including third-party and embedded AI, with clear classification of risk materiality. |
| 3 | Risk Materiality Assessment | Assess the materiality of each AI system based on impact, autonomy, and scope, then apply proportionate governance controls. |
| 4 | Data Management | Ensure data quality, lineage, and governance for all AI inputs, including validation of training data and ongoing monitoring of data drift. |
| 5 | Fairness & Transparency | Implement processes to detect and mitigate bias, and provide appropriate explanations of AI decisions to affected stakeholders. |
| 6 | Evaluation & Testing | Conduct rigorous pre-deployment testing, including adversarial testing, stress testing, and validation against intended use cases. |
| 7 | Human Oversight & Control | Maintain meaningful human oversight proportionate to AI autonomy, with clear escalation paths and the ability to override or shut down AI systems. |
| 8 | Third-Party AI Risk | Apply due diligence and ongoing monitoring to all third-party AI providers, including model vendors, API services, and embedded AI in enterprise software. |
| 9 | Monitoring & Incident Response | Implement continuous monitoring of AI system performance, drift detection, and incident response procedures with defined escalation protocols. |
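To make domains 2 and 3 concrete, the sketch below shows one way an inventory entry and a materiality rating might be represented. It is illustrative only: the three-level scale, the `AISystem` fields, and the "take the highest factor" rule are assumptions made for this example, not a scoring method prescribed by the guidelines, and the two systems listed are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Assumed three-level rating scale (not taken from the guidelines)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AISystem:
    """One inventory entry; third-party and embedded AI are recorded too."""
    name: str
    third_party: bool
    impact: Level    # consequence of an error for customers or the institution
    autonomy: Level  # how far the system acts without human review
    scope: Level     # breadth of processes or customers affected


def materiality(system: AISystem) -> Level:
    """Hypothetical rule: the overall tier is the highest factor rating."""
    return max(system.impact, system.autonomy, system.scope)


# Hypothetical inventory entries for illustration.
inventory = [
    AISystem("faq-chatbot", third_party=True,
             impact=Level.LOW, autonomy=Level.LOW, scope=Level.MEDIUM),
    AISystem("trade-execution-agent", third_party=False,
             impact=Level.HIGH, autonomy=Level.HIGH, scope=Level.MEDIUM),
]

# Map each system to its materiality tier so controls can be applied per tier.
by_tier = {s.name: materiality(s).name for s in inventory}
```

A conservative "highest factor wins" rule is only one option. An institution could equally use a weighted score or a decision matrix, provided the resulting tier drives proportionate controls.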

The AIRG explicitly identifies AI agents as introducing heightened risk. It recognises that agents with tool access can autonomously execute actions with real-world consequences beyond those of traditional predictive models. This is the area where most financial institutions have the least internal capability, and where Corvair's methodology provides the most differentiated value.

The challenges are compounded by the speed at which agentic AI is being adopted. Financial institutions deploying AI agents for trade execution, client servicing, fraud detection, and operational automation face governance requirements that cannot be addressed by extending existing model risk management frameworks alone.
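One control pattern that goes beyond traditional model risk management is a gate between an agent and its tools, so that high-risk actions need approval and an operator can halt the agent. The following is a minimal sketch of that idea, not a reference implementation: `OversightGate`, the tool names, and the approval threshold are all hypothetical, and the `approver` callback stands in for a real human review step.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class OversightGate:
    """Hypothetical wrapper that every agent tool call must pass through."""
    approver: Callable[[str, dict], bool]  # stand-in for a human reviewer
    high_risk_tools: frozenset
    halted: bool = False                   # operator kill switch
    audit_log: list = field(default_factory=list)

    def execute(self, tool_name: str, tool: Callable[..., Any], args: dict) -> Any:
        # A halted agent may not act at all.
        if self.halted:
            self.audit_log.append((tool_name, "blocked: halted"))
            raise RuntimeError("agent halted by operator")
        # High-risk tools run only if the approver agrees.
        if tool_name in self.high_risk_tools and not self.approver(tool_name, args):
            self.audit_log.append((tool_name, "rejected"))
            return None
        self.audit_log.append((tool_name, "executed"))
        return tool(**args)


# Hypothetical tools an agent might call.
def get_quote(symbol: str) -> str:
    return f"quote for {symbol}"


def place_order(symbol: str, amount: int) -> str:
    return f"ordered {amount} of {symbol}"


# Assumed policy for the example: orders above 10,000 are not approved.
gate = OversightGate(
    approver=lambda name, args: args.get("amount", 0) <= 10_000,
    high_risk_tools=frozenset({"place_order"}),
)

r1 = gate.execute("get_quote", get_quote, {"symbol": "D05"})
r2 = gate.execute("place_order", place_order, {"symbol": "D05", "amount": 50_000})
```

Here the low-risk quote lookup runs immediately, the oversized order is rejected and returns `None`, and both outcomes land in `audit_log`. Setting `gate.halted = True` blocks every later call, which mirrors the override and shutdown ability listed under Human Oversight & Control.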

Published in January 2026 at the World Economic Forum, the Infocomm Media Development Authority's Agentic AI Governance Framework establishes the baseline expectation for responsible agentic AI deployment in Singapore. While voluntary, it complements the AIRG by providing practical guidance on agentic AI risk management, multi-agent orchestration, and human-agent interaction patterns.

Financial institutions should treat the IMDA framework as a leading indicator of future regulatory expectations, particularly for agentic deployments that extend beyond traditional model boundaries.

Singapore's Personal Data Protection Act (PDPA) intersects with AI governance wherever AI systems process personal data — which in financial services is nearly every use case. The PDPC has issued AI-specific advisory guidelines that clarify obligations for transparency, consent, and accountability in automated decision-making.

Additionally, SS 714:2025, Singapore's national standard for AI governance, provides a certifiable framework that organisations can adopt to demonstrate compliance maturity. Together, the PDPA, IMDA framework, and SS 714 create a layered national ecosystem that complements and extends the MAS AIRG for regulated financial institutions.

Understand what the nine domains mean for your institution and build a governance architecture that satisfies MAS expectations.
