The Problem

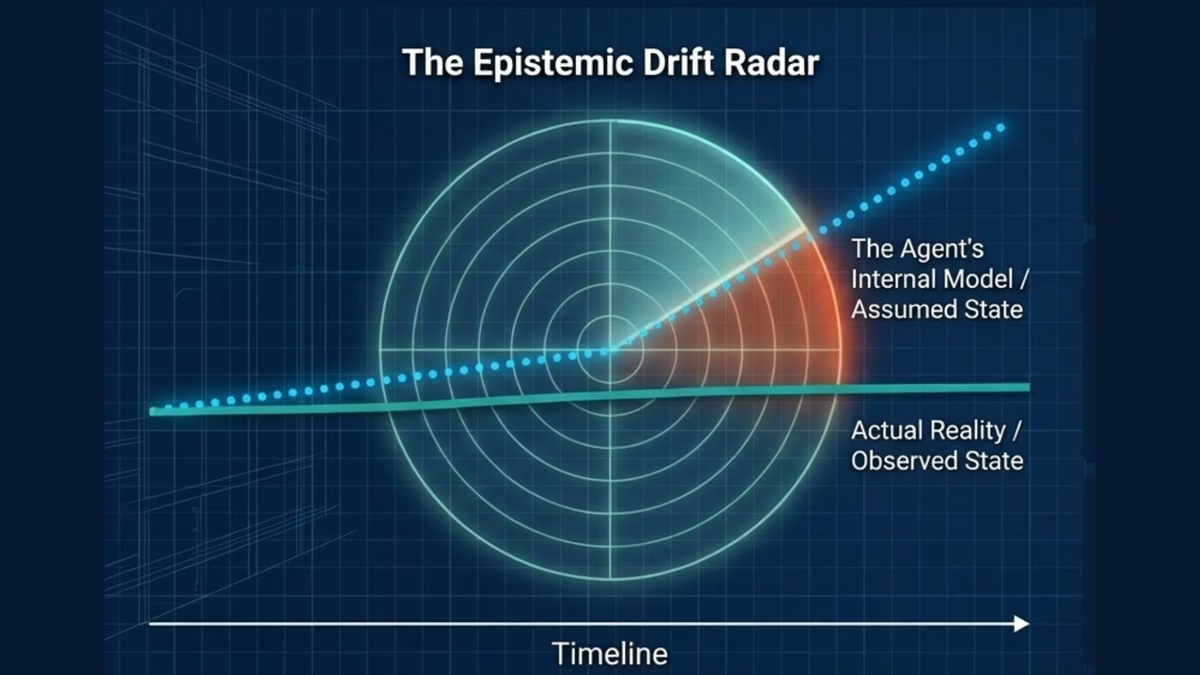

Standard monitoring detects data drift and model drift. But agentic systems face a subtler failure mode: epistemic drift, where the agent's internal model of reality gradually diverges from actual reality. This happens because:

- Retrieved context may be semantically relevant but temporally stale

- Assumptions valid at deployment may no longer hold

- The reasoning chain may accumulate small errors that compound over time

Epistemic drift is particularly dangerous because the agent continues to function normally by every standard metric. It simply acts on a version of reality that no longer exists.
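To make the first cause concrete, here is a minimal Python sketch of how a retrieved chunk can score highly on semantic relevance while being too old to trust. Everything in it is a hypothetical illustration rather than part of any particular retrieval library: the `RetrievedChunk` type, the `last_verified` field, the `split_by_freshness` helper, and the 0.75 similarity and 90-day age thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class RetrievedChunk:
    """Hypothetical retrieval result; field names are illustrative."""
    text: str
    similarity: float         # semantic relevance score in [0, 1]
    last_verified: datetime   # when the content was last known to be true


def split_by_freshness(chunks, now, max_age, min_similarity=0.75):
    """Split relevant chunks into (fresh, stale) by age.

    Chunks below min_similarity are dropped entirely. Both thresholds
    are assumptions, not recommended values.
    """
    fresh, stale = [], []
    for chunk in chunks:
        if chunk.similarity < min_similarity:
            continue  # not relevant enough to consider at all
        if now - chunk.last_verified <= max_age:
            fresh.append(chunk)
        else:
            stale.append(chunk)  # relevant, but possibly no longer true
    return fresh, stale


# Made-up example data: the older chunk is the *better* semantic match.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
chunks = [
    RetrievedChunk("Rate limit is 100 requests/min", 0.93,
                   datetime(2023, 1, 10, tzinfo=timezone.utc)),
    RetrievedChunk("Rate limit is 60 requests/min", 0.88,
                   datetime(2024, 5, 20, tzinfo=timezone.utc)),
]

fresh, stale = split_by_freshness(chunks, now, max_age=timedelta(days=90))
print("fresh:", [c.text for c in fresh])
print("stale:", [c.text for c in stale])
```

A ranker that looks only at `similarity` would prefer the outdated chunk here, and a relevance metric would report nothing wrong. Separating the two signals is one possible way to surface that gap. Whether a stale chunk should be dropped, down-weighted, or re-verified is a design choice this sketch leaves open.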