What It Is

A focused two-day workshop for AI teams, risk teams, and technology leadership on the specific risks introduced by AI agents (autonomous AI systems with tool access and decision-making authority) and the controls MAS expects. The workshop addresses the part of the AIRG that most financial institutions are least prepared for.

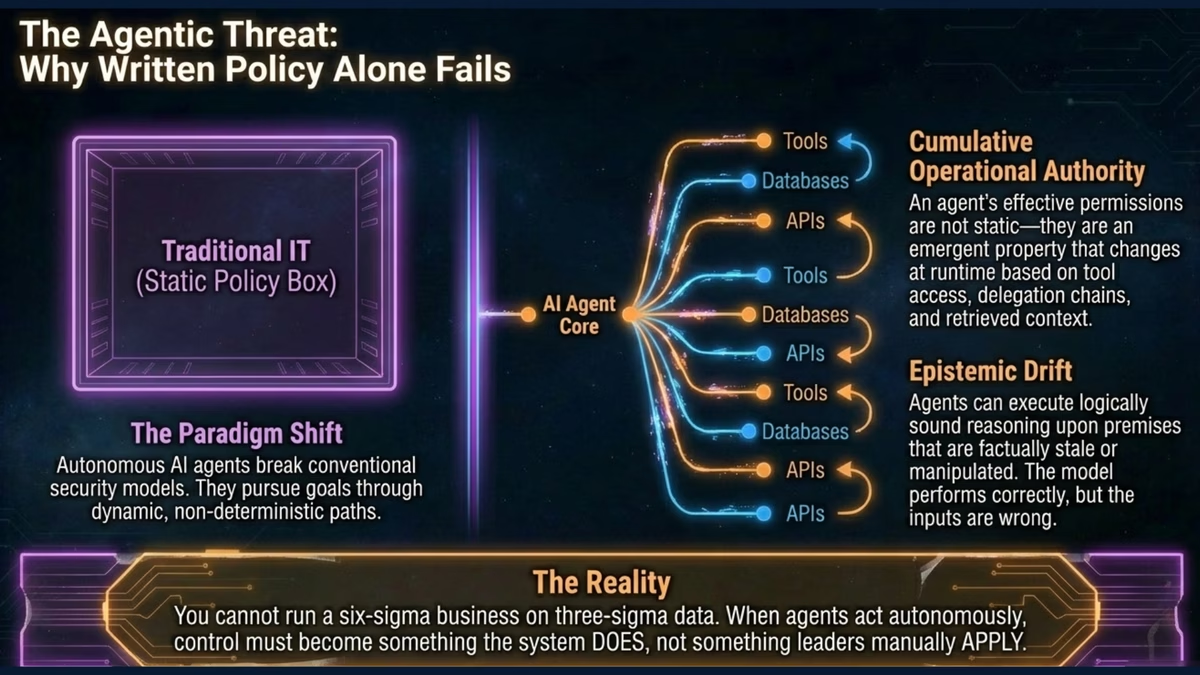

The AIRG explicitly identifies AI agents as introducing heightened risk, recognising that agents with tool access can autonomously execute actions with real-world consequences beyond those of traditional predictive models.