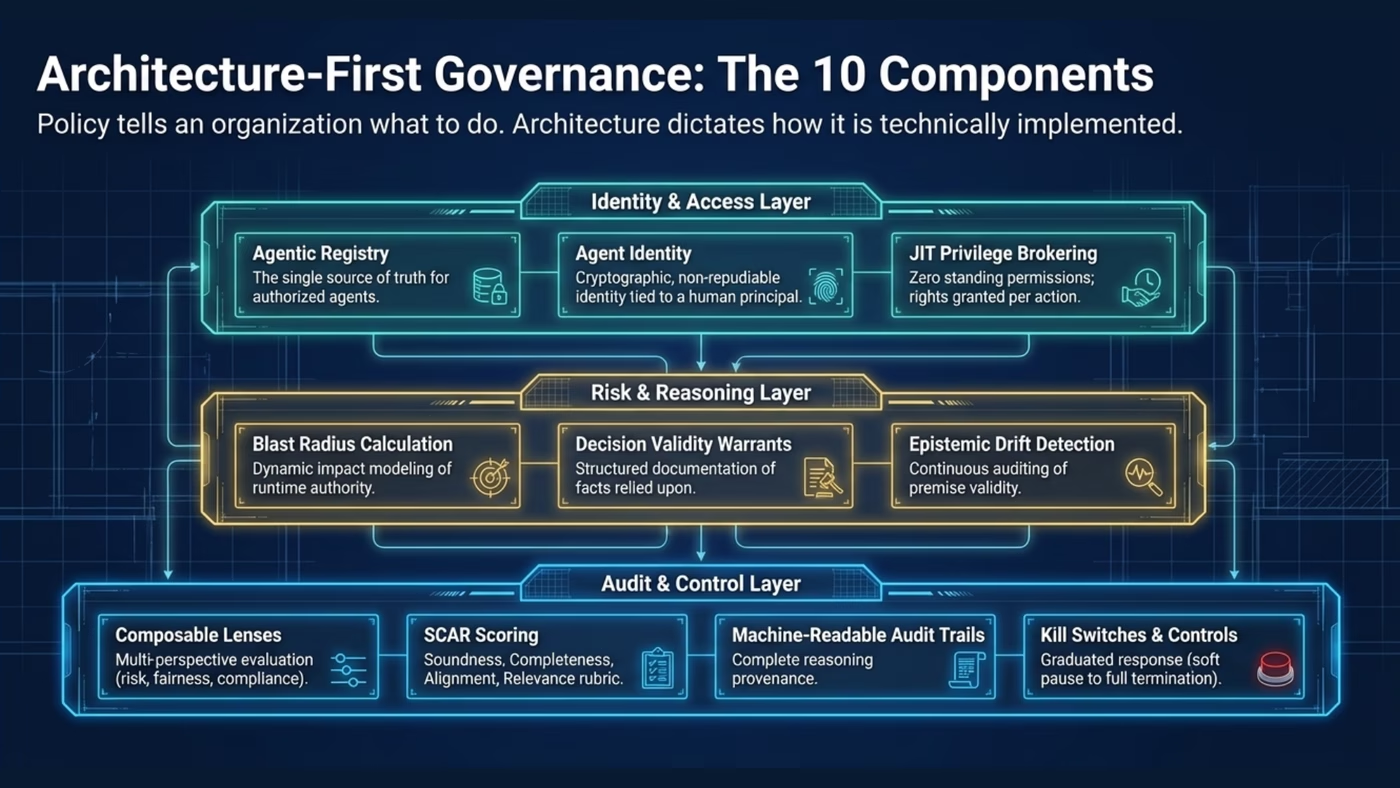

The Ten Components

The Agentic Registry

A purpose-built registry that goes beyond static inventory. Each agent is profiled with declared capabilities, approved operational scope, data access permissions, tool integrations, delegation authorities, and behavioural constraints. It is the single source of truth for three questions: what agents do we have, what are they authorised to do, and who is accountable for each one? It is built on a ten-layer governance data model that captures identity, authority, capabilities, context, and mission in a single versioned record.
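As a rough illustration of a versioned registry record, the sketch below models one entry and the three lookup questions. The class names, field names, and the example agent are assumptions made for illustration; they are not the framework's actual ten-layer schema.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AgentRecord:
    """One versioned registry entry (field names are illustrative)."""
    agent_id: str
    owner: str                      # accountable human or team
    capabilities: frozenset
    approved_scope: frozenset       # actions the agent may perform
    data_access: frozenset
    tools: frozenset
    may_delegate_to: frozenset = frozenset()
    constraints: tuple = ()
    version: int = 0                # assigned by the registry


class AgentRegistry:
    def __init__(self) -> None:
        self._history = {}          # agent_id -> list of AgentRecord versions

    def register(self, record: AgentRecord) -> AgentRecord:
        """Store a new version; earlier versions are kept, never overwritten."""
        versions = self._history.setdefault(record.agent_id, [])
        stamped = replace(record, version=len(versions) + 1)
        versions.append(stamped)
        return stamped

    def current(self, agent_id: str) -> AgentRecord:
        return self._history[agent_id][-1]

    def is_authorised(self, agent_id: str, action: str) -> bool:
        return action in self.current(agent_id).approved_scope

    def accountable_owner(self, agent_id: str) -> str:
        return self.current(agent_id).owner


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="claims-triage",
    owner="claims-ops@example.com",
    capabilities=frozenset({"summarise", "classify"}),
    approved_scope=frozenset({"read_claim", "flag_claim"}),
    data_access=frozenset({"claims_db"}),
    tools=frozenset({"claims_api"}),
))
print(registry.is_authorised("claims-triage", "approve_payout"))  # False
```

Keeping every version in a list, rather than updating in place, is one simple way to make the record history inspectable later.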

Agent Identity

Cryptographic, non-repudiable identity for every agent instance based on standards like SPIFFE. Every action is tied to a specific agent, a specific invocation, and a specific human principal. Identity is short-lived and automatically rotated, eliminating the risk of static secrets and compromised service accounts. A digital birth certificate establishes verifiable origin when an agent is first registered, binding all subsequent artifacts to one subject.
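The binding of agent, invocation, and human principal to a short-lived identity might be sketched as follows. This is only a data-shape illustration: it contains no cryptography, the `/agent/<name>` path layout and the five-minute lifetime are assumptions, and a real deployment would rely on signed SPIFFE identity documents (SVIDs) issued by a workload identity system.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentIdentity:
    spiffe_id: str        # SPIFFE IDs have the form spiffe://<trust-domain>/<path>
    invocation_id: str    # unique per invocation
    principal: str        # human on whose behalf the agent acts
    issued_at: datetime
    ttl: timedelta

    def is_valid(self, now: datetime) -> bool:
        """Valid only inside its short lifetime; after that it must be reissued."""
        return self.issued_at <= now < self.issued_at + self.ttl


def issue_identity(trust_domain: str, agent_name: str, principal: str,
                   now: datetime, ttl: timedelta = timedelta(minutes=5)) -> AgentIdentity:
    # Hypothetical path layout; the source does not specify one.
    return AgentIdentity(
        spiffe_id=f"spiffe://{trust_domain}/agent/{agent_name}",
        invocation_id=str(uuid.uuid4()),
        principal=principal,
        issued_at=now,
        ttl=ttl,
    )


issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
identity = issue_identity("example.org", "claims-triage", "alice@example.com", issued)
print(identity.spiffe_id)                                  # spiffe://example.org/agent/claims-triage
print(identity.is_valid(issued + timedelta(minutes=10)))   # False
```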

Just-in-Time Security Brokering

Zero standing permissions. For each action, the agent requests the specific privilege it needs with context. A policy engine evaluates the request against approved scope, current risk context, and applicable constraints, granting only minimum privilege for minimum duration. Automatic revocation after task completion, timeout, or risk escalation. This eliminates permission waste — the excess authority that traditional role-based access control inevitably creates.
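The request, evaluate, grant, and revoke cycle described above can be sketched as a small broker. The risk score scale, the `max_risk` cutoff, and the two-minute lifetime are hypothetical parameters chosen for illustration; a production policy engine would evaluate far richer context.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Grant:
    agent_id: str
    privilege: str
    expires_at: datetime


class PrivilegeBroker:
    def __init__(self, approved_scope, max_risk=0.5, ttl=timedelta(minutes=2)):
        self._scope = approved_scope    # agent_id -> set of privileges it may ever hold
        self._max_risk = max_risk       # hypothetical risk cutoff in [0, 1]
        self._ttl = ttl
        self._active = set()            # nothing is held until requested

    def request(self, agent_id: str, privilege: str, risk: float,
                now: datetime) -> Optional[Grant]:
        """Grant one privilege for a short window, or refuse."""
        if privilege not in self._scope.get(agent_id, set()):
            return None                 # outside approved scope
        if risk > self._max_risk:
            return None                 # current risk context too high
        grant = Grant(agent_id, privilege, now + self._ttl)
        self._active.add(grant)
        return grant

    def is_active(self, grant: Grant, now: datetime) -> bool:
        return grant in self._active and now < grant.expires_at

    def revoke(self, grant: Grant) -> None:
        """Called on task completion or risk escalation."""
        self._active.discard(grant)


t0 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
broker = PrivilegeBroker({"claims-triage": {"read_claim"}})
grant = broker.request("claims-triage", "read_claim", risk=0.2, now=t0)
print(broker.is_active(grant, t0 + timedelta(minutes=1)))   # True
print(broker.is_active(grant, t0 + timedelta(minutes=3)))   # False (timed out)
```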

Blast Radius Calculation

Dynamic modelling of maximum potential impact given current authority, tool access, and data reach. The blast radius changes as the agent's context changes — it is a runtime property, not a deployment-time assessment. Five variants (inherent, static, dynamic, maximum potential, and simulated) provide different views for different governance needs. Feeds directly into AIRG materiality assessment, making it operationally meaningful rather than a one-time checkbox.
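One way to picture blast radius as a runtime property is to recompute reachable impact whenever the agent's granted tools change. The sketch below assumes a simple reachability graph and per-resource impact weights; both the graph and the weights are invented for illustration and do not correspond to any of the five named variants specifically.

```python
from collections import deque


def blast_radius(granted, reach, impact):
    """Sum the impact of everything reachable from the currently granted tools.

    granted: set of tool names the agent holds right now
    reach:   dict mapping a node to the set of nodes it can touch
    impact:  dict mapping a node to a hypothetical impact weight
    """
    seen = set()
    queue = deque(granted)
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        queue.extend(reach.get(node, ()))
    return sum(impact.get(node, 0.0) for node in seen)


reach = {
    "crm_tool": {"customer_db"},
    "payments_tool": {"ledger", "customer_db"},
}
impact = {"customer_db": 5.0, "ledger": 8.0}

print(blast_radius({"crm_tool"}, reach, impact))                    # 5.0
print(blast_radius({"crm_tool", "payments_tool"}, reach, impact))   # 13.0
```

The same agent yields a different figure as soon as a second tool is granted, which is the point of treating the value as dynamic rather than fixed at deployment.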

Decision Validity Warrants

Structured records documenting the specific facts, assumptions, and data sources relied upon for every significant decision; the temporal validity of each premise; source reliability and information credibility; and the logical chain connecting premises to conclusion. Distinct from statistical confidence — warrants answer the question: is the foundation of this decision sound? Not just "how confident is the model?" but "are the inputs valid?"
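A warrant of this kind could be represented as a structured record whose premises carry their own source, reliability, and expiry. The field names, the 0 to 1 reliability scale, and the 0.7 cutoff below are assumptions for illustration only.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Premise:
    statement: str
    source: str
    reliability: float      # hypothetical scale: 0.0 (unreliable) to 1.0 (authoritative)
    valid_until: datetime   # end of the premise's temporal validity


@dataclass(frozen=True)
class Warrant:
    decision: str
    premises: tuple         # Premise objects the decision relied on
    reasoning: tuple        # ordered steps linking premises to the conclusion

    def weak_premises(self, now: datetime, min_reliability: float = 0.7) -> list:
        """Premises that have expired or come from an insufficiently reliable source."""
        return [p for p in self.premises
                if now >= p.valid_until or p.reliability < min_reliability]

    def is_sound(self, now: datetime, min_reliability: float = 0.7) -> bool:
        return not self.weak_premises(now, min_reliability)


warrant = Warrant(
    decision="approve credit line increase",
    premises=(
        Premise("income verified", "payroll_api", 0.95,
                datetime(2025, 6, 1, tzinfo=timezone.utc)),
        Premise("no open disputes", "crm_export", 0.80,
                datetime(2025, 2, 1, tzinfo=timezone.utc)),
    ),
    reasoning=("income supports repayment", "no disputes, so no hold applies"),
)
print(warrant.is_sound(datetime(2025, 1, 15, tzinfo=timezone.utc)))   # True
print(warrant.is_sound(datetime(2025, 3, 1, tzinfo=timezone.utc)))    # False
```

Note that nothing here measures model confidence; the check is purely about whether the inputs still hold.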

Epistemic Drift Detection

Continuous monitoring of premise validity, not just output quality. Maps causal dependencies in reasoning chains, assigns temporal validity windows, monitors for divergence between assumed and observed state, and triggers re-evaluation when key premises are invalidated. Epistemic drift is particularly dangerous because the agent continues to function normally by every standard metric — it simply acts on a version of reality that no longer exists.
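The monitoring loop described above, comparing assumed state with observed state and flagging dependent decisions, might look roughly like this. The numeric premises, tolerances, and decision identifiers are hypothetical; real premises are often not single numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedPremise:
    name: str
    assumed: float        # value the agent's reasoning relied on
    tolerance: float      # how far reality may move before the premise is invalid
    dependents: tuple     # decisions whose reasoning chain uses this premise


def detect_drift(premises, observed):
    """Return the decisions that need re-evaluation, sorted for stable output.

    observed: dict mapping premise name to the currently observed value.
    Premises with no fresh observation are skipped rather than assumed valid or invalid.
    """
    to_reevaluate = set()
    for premise in premises:
        if premise.name not in observed:
            continue
        if abs(observed[premise.name] - premise.assumed) > premise.tolerance:
            to_reevaluate.update(premise.dependents)
    return sorted(to_reevaluate)


premises = [
    TrackedPremise("base_rate_pct", assumed=4.0, tolerance=0.25,
                   dependents=("loan-pricing-17", "hedge-plan-3")),
    TrackedPremise("eur_usd", assumed=1.10, tolerance=0.05,
                   dependents=("fx-quote-9",)),
]
print(detect_drift(premises, {"base_rate_pct": 4.75, "eur_usd": 1.11}))
# ['hedge-plan-3', 'loan-pricing-17']
```

The agent's outputs could look entirely normal in this scenario; only the comparison against observed state reveals that two decisions rest on a stale rate.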

Deep dive: Epistemic Drift & Reasoning Validity

Composable Lenses & Points of View

Atomic reasoning perspectives — risk lens, fairness lens, regulatory compliance lens, customer-impact lens — assembled into hierarchical stakeholder views. Veto Lenses enforce non-negotiable constraints: the option disappears before a human needs to reject it, shifting governance from correcting violations to making violations structurally unlikely. Ensures fairness is a structured multi-dimensional assessment, not a single metric.
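The effect of a veto lens, removing an option before anyone has to reject it, can be shown with a small filter-then-score sketch. The lens functions, the 36% APR cap, and the option attributes are invented for illustration, and the hierarchical assembly into stakeholder views is omitted.

```python
def apply_lenses(options, veto_lenses, scoring_lenses):
    """Drop vetoed options, then report one score per lens for the survivors.

    veto_lenses:    dict of name -> function returning True when the option violates
    scoring_lenses: dict of name -> function returning a score for the option
    Scores are kept per lens rather than collapsed into a single number.
    """
    surviving = [
        option for option in options
        if not any(violates(option) for violates in veto_lenses.values())
    ]
    return [
        {"option": option["name"],
         "scores": {name: score(option) for name, score in scoring_lenses.items()}}
        for option in surviving
    ]


# Hypothetical lenses and options.
veto_lenses = {"regulatory": lambda o: o["apr"] > 36}
scoring_lenses = {
    "risk": lambda o: 5 - o["default_risk"],
    "customer_impact": lambda o: o["customer_benefit"],
}
options = [
    {"name": "standard_loan", "apr": 12, "default_risk": 2, "customer_benefit": 4},
    {"name": "high_apr_loan", "apr": 48, "default_risk": 1, "customer_benefit": 2},
]

views = apply_lenses(options, veto_lenses, scoring_lenses)
print(views)
# [{'option': 'standard_loan', 'scores': {'risk': 3, 'customer_impact': 4}}]
```

The vetoed option never appears in the result, so downstream reviewers only see candidates that already satisfy the hard constraint.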

The SCAR Scoring Rubric

Four-dimensional evaluation of reasoning quality. Soundness: is the logical chain valid? Are inferences justified? Completeness: are all relevant factors considered? Are there gaps? Alignment: does reasoning align with objectives and risk appetite? Does it serve the mission? Relevance: is the agent addressing the right question? Is reasoning contextually appropriate? Each dimension scored 1–5. Below-threshold scores trigger escalation or suspension — an early warning before output quality visibly declines.
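A minimal sketch of the scoring and threshold logic follows. The source states only that each dimension is scored 1 to 5 and that below-threshold scores trigger escalation or suspension; the specific thresholds and the rule of acting on the lowest dimension are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"
    SUSPEND = "suspend"


@dataclass(frozen=True)
class ScarScore:
    soundness: int
    completeness: int
    alignment: int
    relevance: int

    def __post_init__(self):
        for name in ("soundness", "completeness", "alignment", "relevance"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    def lowest(self) -> int:
        return min(self.soundness, self.completeness, self.alignment, self.relevance)


def triage(score: ScarScore, escalate_below: int = 3, suspend_below: int = 2) -> Outcome:
    """Act on the weakest dimension (hypothetical thresholds)."""
    low = score.lowest()
    if low < suspend_below:
        return Outcome.SUSPEND
    if low < escalate_below:
        return Outcome.ESCALATE
    return Outcome.PROCEED


print(triage(ScarScore(4, 4, 4, 4)))   # Outcome.PROCEED
print(triage(ScarScore(4, 2, 4, 5)))   # Outcome.ESCALATE
print(triage(ScarScore(1, 5, 5, 5)))   # Outcome.SUSPEND
```

Using the minimum rather than an average means a single weak dimension cannot be masked by strong scores elsewhere.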

Machine-Readable Audit Trails

Complete reasoning provenance for every significant decision: lenses applied, SCAR scores, validity warrant, premises relied upon, alternatives considered, constraints evaluated. Structured data, not prose. Immutable and cryptographically signed. Designed for regulatory inspection, forensic investigation, and automated compliance reporting — satisfying the most stringent audit requirements across MAS, EU AI Act, and US frameworks.
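To illustrate tamper-evident, structured audit entries, the sketch below chains each entry to the previous one and authenticates it with an HMAC from the Python standard library. HMAC with a shared key is used here only as a stand-in: it detects modification but does not provide the non-repudiation of an asymmetric signature, and the record fields are hypothetical.

```python
import hashlib
import hmac
import json


def _mac(key: bytes, body: dict) -> str:
    # Canonical JSON so the same content always produces the same bytes.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, canonical, hashlib.sha256).hexdigest()


def append_entry(trail: list, key: bytes, record: dict) -> dict:
    """Append a record linked to the previous entry's MAC."""
    prev = trail[-1]["mac"] if trail else ""
    body = {"record": record, "prev": prev}
    entry = {"record": record, "prev": prev, "mac": _mac(key, body)}
    trail.append(entry)
    return entry


def verify(trail: list, key: bytes) -> bool:
    """Recompute every MAC and check that the chain links are intact."""
    prev = ""
    for entry in trail:
        body = {"record": entry["record"], "prev": entry["prev"]}
        if entry["prev"] != prev:
            return False
        if not hmac.compare_digest(entry["mac"], _mac(key, body)):
            return False
        prev = entry["mac"]
    return True


key = b"demo-key-not-for-production"
trail = []
append_entry(trail, key, {"decision": "approve", "lenses": ["risk", "fairness"],
                          "scar": {"S": 4, "C": 4, "A": 5, "R": 4}})
append_entry(trail, key, {"decision": "escalate", "lenses": ["regulatory"],
                          "scar": {"S": 2, "C": 3, "A": 4, "R": 4}})
print(verify(trail, key))                     # True
trail[0]["record"]["decision"] = "deny"       # simulate tampering
print(verify(trail, key))                     # False
```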

Kill Switches & Emergency Controls

Graduated response framework with four levels: soft pause (complete current action, take no new actions), hard pause (suspend immediately, preserve state for analysis), controlled rollback (reverse completed actions to last known-good state), and full termination (stop all activity, revoke privileges, alert operators). Each level has defined trigger conditions, authorisation requirements, and state preservation procedures. Integrates with the JIT privilege broker and the audit trail.
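The four levels could be sketched as an ordered enumeration applied to a toy agent runtime. The `AgentRuntime` class and its fields are hypothetical, and treating every level above soft pause as also halting execution and snapshotting state is an assumption; trigger conditions and authorisation checks are left out.

```python
from enum import IntEnum


class Level(IntEnum):
    SOFT_PAUSE = 1
    HARD_PAUSE = 2
    ROLLBACK = 3
    TERMINATE = 4


class AgentRuntime:
    """Toy stand-in for a running agent."""

    def __init__(self, privileges):
        self.accepting_new = True
        self.running = True
        self.completed = []          # actions finished since the last known-good state
        self.undone = []             # actions reversed by a rollback, newest first
        self.privileges = set(privileges)
        self.snapshot = None
        self.alerts = []

    def apply(self, level: Level) -> None:
        # Every level stops new actions; a soft pause lets the current one finish.
        self.accepting_new = False
        if level >= Level.HARD_PAUSE:
            self.running = False
            # Preserve state before anything else changes it.
            self.snapshot = {"completed": list(self.completed),
                             "privileges": sorted(self.privileges)}
        if level == Level.ROLLBACK:
            self.undone = list(reversed(self.completed))
            self.completed.clear()
        if level == Level.TERMINATE:
            self.privileges.clear()
            self.alerts.append("agent terminated")


agent = AgentRuntime({"read_claim"})
agent.completed.extend(["flag_claim:1", "flag_claim:2"])
agent.apply(Level.ROLLBACK)
print(agent.running, agent.undone)   # False ['flag_claim:2', 'flag_claim:1']
```

Reversing actions newest-first mirrors how a rollback would normally unwind dependent steps.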