Every major regulatory framework for AI requires systems to be accurate, robust, and reliable. None, however, provides a quantitative methodology for measuring the actual quality level of an agentic process in a way that is comparable across systems, trackable over time, and meaningful to risk and operations executives. Institutions are left with qualitative ratings such as “high,” “medium,” and “low,” which do not support rigorous risk management.

Lean Six Sigma has been a standard methodology for measuring and improving process quality in manufacturing and financial services for decades. Its core metric, the sigma level, is derived from the number of defects a process produces per million opportunities (DPMO). A 6-sigma process produces 3.4 defects per million opportunities, a figure that assumes the conventional 1.5-sigma long-term shift. Banking operations typically target 5–6 sigma for critical processes. The methodology is well understood by COOs, CROs, and boards.
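
The conversion from observed defects to a sigma level takes only a few lines. The sketch below is a minimal illustration that assumes the conventional 1.5-sigma long-term shift used in standard Six Sigma conversion tables. It treats each checked step of an agent run as one defect opportunity, which is an illustrative assumption. The function names `dpmo` and `sigma_level` and the sample run figures are hypothetical, not a description of any specific product's method.

```python
from statistics import NormalDist

# Conventional long-term shift used in standard Six Sigma conversion tables (assumption)
SHIFT = 1.5


def dpmo(defects: int, units: int, opportunities_per_unit: int) -> float:
    """Defects per million opportunities (hypothetical helper)."""
    total_opportunities = units * opportunities_per_unit
    if total_opportunities <= 0:
        raise ValueError("need at least one defect opportunity")
    return defects / total_opportunities * 1_000_000


def sigma_level(dpmo_value: float, shift: float = SHIFT) -> float:
    """Sigma level implied by a DPMO value, including the long-term shift.

    Zero defects gives an unbounded sigma level, so DPMO must be strictly positive.
    """
    if not 0 < dpmo_value < 1_000_000:
        raise ValueError("DPMO must be strictly between 0 and 1,000,000")
    # Fraction of opportunities that are defect-free
    yield_fraction = 1 - dpmo_value / 1_000_000
    # Standard-normal quantile of the yield, plus the conventional shift
    return NormalDist().inv_cdf(yield_fraction) + shift


if __name__ == "__main__":
    # Hypothetical agent run: 10,000 tasks, 5 checked steps each, 12 defective steps
    observed = dpmo(defects=12, units=10_000, opportunities_per_unit=5)
    print(f"DPMO: {observed:.0f}")  # prints "DPMO: 240"
    print(f"Sigma level: {sigma_level(observed):.2f}")
```

With the shift applied, 3.4 DPMO maps back to roughly 6 sigma, and about 233 DPMO maps to roughly 5 sigma. This is how the figures in conversion tables are produced. How an "opportunity" is defined for an agentic process is the substantive design decision, and the choice changes the resulting sigma level directly.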

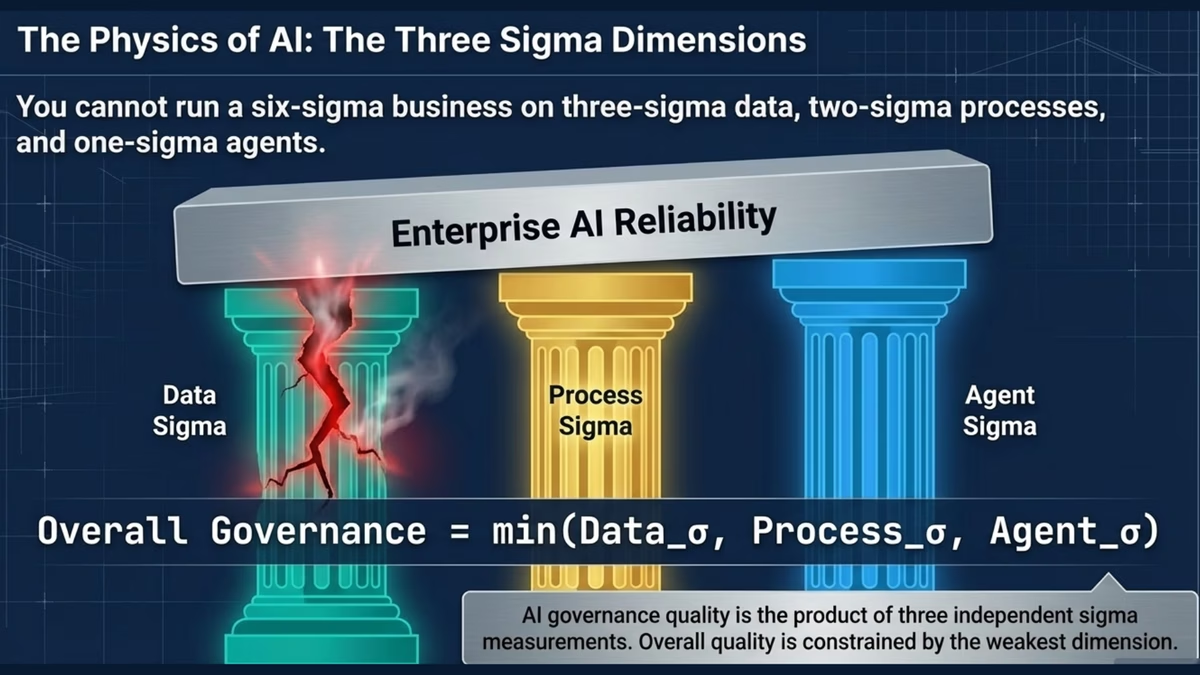

Corvair applies this proven methodology to a domain where it has not yet been systematically used: agentic AI.